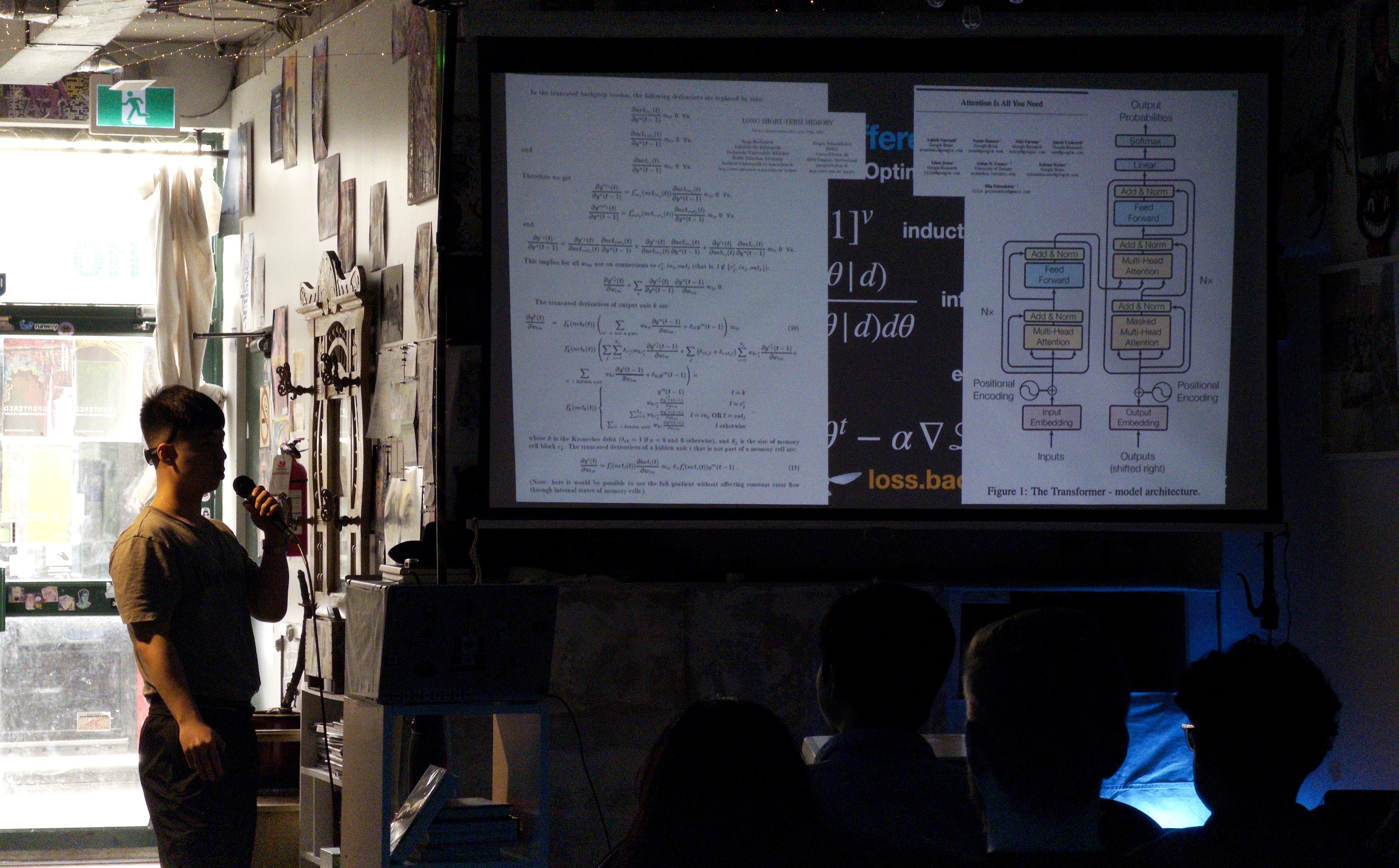

Myself, presenting an early outline of SITP at Toronto School of Foundation Modeling Season 1

Preface

This book is aspirationally titled The Structure and Interpretation of Tensor Programs (abbreviated as SITP), as its goal is to serve the same role for software 2.0 as The Structure and Interpretation of Computer Programs (abbreviated as SICP) did for software 1.0. Written by Harold Abelson and Gerald Sussman with Julie Sussman, SICP is considered by many to be integral to the programmer canon, providing an introductory whirlwind tour of the essence of computation through a logically unbroken yet informal sequence from programming to programming languages. SICP influenced other texts such as its dual HtDP (introduced at Waterloo by Prabhakar Ragde), its typed counterpart OCEB, and the recent addition of DCIC, spawning from its phylogenetic cousin PAPL. The primary reason this format is loved by many (and, to be fair, equally disliked by a few) is its somewhat challenging learning curve for beginners: it is not a book that simply teaches syntax, like the many "Learn X in Y Minutes" books (which at this point LLMs adequately replace), but rather one that teaches the semantics of programming with programming languages. The difficulty of teaching has always been semantics: see Matthias Felleisen's TeachScheme!, Shriram Krishnamurthi's Standard Model of Programming Languages, and Will Crichton's Profiling Programming Language Learning (something on SITP's roadmap).

Before the success of large language models (notably supervised finetuning and reinforcement learning from human feedback on top of a pretrained transformer), the pedagogical return on investment of an introductory book on artificial intelligence following the same form as SICP was low, as readers would build their own pytorch from scratch "just" to classify MNIST or ImageNet. However, now that deep learning systems are becoming as important as, if not more important than, the models themselves (especially in the period of artificial intelligence research dubbed the era of scaling, characterized by the heavy engineering of pouring internet-scale data into the weights of transformer neural networks with massively parallel and distributed compute), that return on investment is higher, as the frontier of deep learning systems moves ever further out of the beginner's reach. Massively parallel processors now have dedicated hardware units for evaluating matrix instructions, called tensor cores, which in turn have precipitated the need for fusion compilers. Even language-runtime codesign and co-optimization, such as profile-guided optimization, is repeating itself with languages such as torch.compile() and runtimes like vllm/sglang. At least this is how I personally felt as a professional engineer transitioning to the world of domain-specific tensor compilers, coming from domain-specific cloud compilers and distributed infrastructure provisioners.

While I enjoyed reading the existing deep learning canon (such as [DFO20] for mathematics, [JWHTT23] for machine learning, [GBC16] for deep learning, and [HKH22] for parallel programming), I couldn't help but imagine how delightful a SICP-style, top-down, just-in-time reading experience would be. If product management and engineering transition into vibecoding and training models respectively, then why aren't we teaching the parallel programming of deep nets to high schoolers and first-year college students from the get-go? Curiosity got the best of me, and the result is the book you hold on your screens.

So in part one of the book, you will train generalized linear models with numpy and then start developing teenygrad by implementing your own multidimensional array abstraction to train those models once again. Then, in part two of the book, you will train deep neural networks with pytorch, following the age of research, and then update the implementation of teenygrad to support gpu-accelerated "eager mode" evaluation of neural network primitives for both forward and backward passes. Finally, in part three of the book (which, if you are more experienced, you may benefit from jumping straight to, to better understand what something like torch.compile() is doing for you), you will update teenygrad for the last time for the age of scaling by developing a "graph mode" compilation and inference engine with tinygrad's RISCy IR, borrowing ideas from ThunderKittens' tile registers, MegaKernels, and Halide/TVM schedules. To continue deepening your knowledge, more resources are provided in the afterword.

The book provides a single resource with code, math, and exposition (inspired by pedagogy such as Dive into Deep Learning by Zhang, Lipton, Li and Smola, and Distill by Carter and Olah) for deep learning systems such as pytorch and jax, while also embedding visualizers, explainers, and lectures from other open source educators (Andrej Karpathy, Grant Sanderson, Stephen Welch, Artem Kirsanov, and so on) to provide a rich, multimodal, dynamic document experience for the reader, as explored by Crichton in research on the technical foundations of technical communication. While the SITP book and the teenygrad codebase are licensed under the MIT License, such embedded content remains the property of its original creators and licensors and is not claimed as original work of this project nor released under the MIT License.

If you empathize with some of my frustrations, you may benefit from the book too.

If you are looking for reading groups, check out the #teenygrad channel in

Good luck on your journey.

Are you ready to begin?